Last month, I went to a talk on Gradle. Today I decided to give it a shot. My goal was to create a simple Groovy project with Gradle. It took less than 30 minutes, so getting started was fast.

Setup

I already had the Groovy Eclipse plugin. I then installed the Gradle plugin from the Eclipse Marketplace. Yes, this could be done at the command line, but I’m used to the M2Eclipse IDE integration and wanted the same for Gradle. This step went as smoothly as any other plugin install.

Creating a new Gradle project

Just like with Maven, the first step is to create a new Gradle project. Since Groovy Quickstart wasn’t in the list, I chose Java Quickstart. The create request appeared to hang, sitting at 0% for over five minutes. This was the first (and really only) problem. I killed Eclipse and started over, but there was no point in doing that; it just takes a long time. Apparently, this is a known issue. I tried again, and after five minutes Gradle did download the dependencies from the Maven repository.

Making the Java project a Groovy project

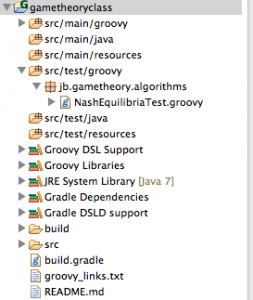

Java quickstart does exactly what it sounds like. It creates a project using the “Maven way” directory structure for Java. To adapt this to a Groovy project, I:

- hand edited build.gradle to add

apply plugin: 'groovy'

- hand edited build.gradle dependencies section to add

groovy group: 'org.codehaus.groovy', name: 'groovy', version: '1.7.10'

(I actually missed this step on the first try and got the error “you must assign a Groovy library to the ‘groovy’ configuration”. The code was documented here.)

- created src/main/groovy and src/test/groovy directories

- gradle > refresh source folders. This is like Maven, where you need to refresh dependencies and the like to keep the Eclipse workspace in sync.

- gradle > build > click build (compile and test)
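
Putting the steps above together, here is a minimal sketch of what build.gradle might look like after the edits. Only the 'groovy' plugin line and the groovy dependency line come from this post. The 'java' and 'eclipse' plugin lines, the repository, and the JUnit dependency are my assumptions about what Java Quickstart generated. Note that the `groovy` and `testCompile` configurations belong to the old Gradle version used here; newer Gradle releases use different configuration names.

```groovy
// Sketch of build.gradle after converting the Java Quickstart project to Groovy.
// Lines marked "assumed" are not from the post; they are a guess at the generated file.

apply plugin: 'java'     // assumed: generated by Java Quickstart
apply plugin: 'eclipse'  // assumed: generated by Java Quickstart
apply plugin: 'groovy'   // added by hand (from the post)

repositories {
    mavenCentral()       // assumed: the post only says "the maven repository"
}

dependencies {
    // added by hand (from the post); without it the build fails because
    // no Groovy library is assigned to the 'groovy' configuration
    groovy group: 'org.codehaus.groovy', name: 'groovy', version: '1.7.10'

    // assumed: JUnit for the tests under src/test/groovy
    testCompile group: 'junit', name: 'junit', version: '4.+'
}
```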

Impressions of the Gradle plugin

- I’ve mentioned a few times that it is very similar to the Maven plugin. This is great, as the motions feel very familiar and the only part that is new is Gradle itself. (Well, that and refreshing my Groovy knowledge; it’s been a while.)

- You can run your GroovyTestCase classes through Eclipse without Gradle (via Run As > JUnit Test).

- My first build (with one class and one test class), including some “downloading the internet”, took 1 minute and 2 seconds. My second build took literally two seconds.

- I like the “up to date check”, which means only the tasks that actually need to run get run.

- I like that you get an Eclipse pop-up if any unit tests fail.
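
To make the “one class and one test class” setup concrete, here is a small sketch of what such a pair could look like. The names Greeter and GreeterTest are hypothetical and are not the classes from my project. With Groovy 1.7.x, GroovyTestCase lives in groovy.util, which is imported automatically; newer Groovy versions moved it to groovy.test.

```groovy
// Hypothetical class, would live in src/main/groovy/Greeter.groovy
class Greeter {
    // Declared return type String, so the GString is converted to a plain String
    String greet(String name) {
        "Hello, ${name}!"
    }
}

// Hypothetical test, would live in src/test/groovy/GreeterTest.groovy
// GroovyTestCase extends JUnit's TestCase, so Eclipse can run it as a JUnit test
class GreeterTest extends GroovyTestCase {
    void testGreet() {
        assertEquals 'Hello, Gradle!', new Greeter().greet('Gradle')
    }
}
```

The same test runs either from Eclipse directly or as part of the Gradle build.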

This blog post also motivated me to start using my GitHub account to make it easy to show the code, in particular the build.gradle file or the whole project. (This class doesn't require any programming, so I think it is OK to put this online. If Coursera complains, I will take it down.)